Confused about the difference between REST APIs / HTTP versus Websockets? This is the article for you.

REST / HTTP is the traditional model for communicating with backend servers. Sometimes termed the Request / Reply or Request / Response pattern, REST / HTTP relies on a client initiating a request to a known address and a server responding to that request.

The most common application of REST APIs is to build access points for data stored in a backend database. Clients (often web applications) make calls to these endpoints to request their data, and the server handles each request by calling the database, performing any data transformation or business logic, and returning the result.
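To make that flow concrete, here is a minimal Python sketch of what such an endpoint handler might do. Everything in it is an assumption for illustration: the `MESSAGES_TABLE` list stands in for a real database, and `handle_get_messages` and the `/rooms/<room>/messages` route are hypothetical names, not part of any particular framework.

```python
import json

# Hypothetical in-memory stand-in for a table in the backend database.
MESSAGES_TABLE = [
    {"id": 1, "room": "general", "sender": "john", "text": "hello"},
    {"id": 2, "room": "general", "sender": "mary", "text": "hi John"},
    {"id": 3, "room": "random", "sender": "john", "text": "anyone here?"},
]


def handle_get_messages(room: str) -> tuple[int, str]:
    """Handle a request such as GET /rooms/<room>/messages (hypothetical route).

    Returns an (HTTP status code, JSON body) pair.
    """
    # 1. "Call the database": select the rows for the requested room.
    rows = [row for row in MESSAGES_TABLE if row["room"] == room]
    if not rows:
        return 404, json.dumps({"error": f"no messages for room {room!r}"})

    # 2. Transform the data: expose only the fields the client needs.
    payload = [{"sender": row["sender"], "text": row["text"]} for row in rows]

    # 3. Return the result to the client.
    return 200, json.dumps(payload)
```

Calling `handle_get_messages("general")` returns a 200 status along with a JSON array holding the two messages in that room. Note that nothing happens until the client asks.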

This model has worked well for a really long time. Most applications that required internet access used a flavour of REST / HTTP APIs to facilitate backend data interaction.

It turns out that this model doesn’t work so well for applications with real-time, or near-real-time, data access requirements. Multiplayer games and web chat applications are perfect examples.

In these worlds, clients expect state changes to be communicated to them in near real time. For instance, what good would a chatting application be if it took 30 seconds or more for the recipient to receive my message?

This is where web sockets come in.

Prefer a video explanation? Check out my YouTube video on REST vs Websockets here.

What are Web Sockets?

Web sockets are a relatively new technology (the protocol was standardized as RFC 6455 in 2011) that enables real time communication between client and server. Sometimes called full-duplex or bi-directional communication, websockets allow both client and server to push and receive messages once a connection is established.

The ability of servers to ‘push’ messages to a client is a huge improvement over the traditional REST based model. Using websockets, servers can notify front-end clients of events as they occur in real time, as opposed to waiting for a client to make a request to the backend server.

There’s a whole bunch of detailed information on web sockets out there, but the goal here is just to remind you of the concepts and the problem web sockets solve. For some additional reading, you can check out this great article on websockets on the Mozilla website.

What are REST APIs?

REST/HTTP APIs are a much older technology than websockets and are what makes most of the internet tick.

Many of the web pages you visit today, including Facebook, Google, Amazon and many others, rely on REST APIs to communicate with backend servers.

REST / HTTP relies on the request response model. This means that in order for the client (say a web page) to acquire information, the client must be the party that initiates the request.

This is in contrast to what we saw with web sockets. With web sockets, once a connection is established between the two parties, the server is also able to initiate data transfers.

It turns out that web sockets are a big improvement over REST for real time use cases, but they present scaling challenges if not implemented carefully. Even so, there are many applications in which web sockets are the preferred way for client and server to communicate.

Now that we have a basic understanding of REST/HTTP versus Websockets, let's look at how to use each approach in a practical example.

Example – A Real Time Chatting Application

Imagine for a moment we are trying to build a real time chatting application. Let's visualize this using the below image.

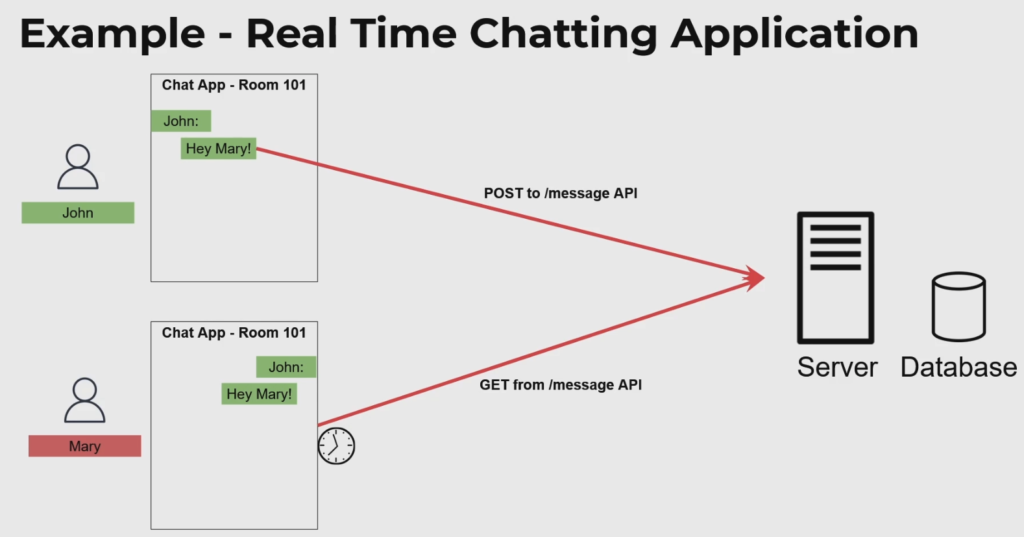

Let's think about how we would model this application with a REST/HTTP API.

In order to do so, we would code up our client program (the front end interface that users interact with) to make periodic backend calls to our server. This could be on a short timer, such as every 5 seconds or so.

In other words, our front end applications will make calls to our backend server (which will store message / room state) and update the front end interface whenever a difference is found.

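In this model, both users are guaranteed to eventually get updated snapshots of the data. However, there is an inherent delay in the ‘real timeness’ of the data: a new message can sit on the server for up to 5 seconds in this case (plus the time the request itself takes) before the other user sees it.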

In this example, we used a model called Short Polling, which basically entails calling the backend every few seconds on a timer.
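Here is a rough Python sketch of what a short polling client could look like. It is a sketch under assumptions, not a real implementation: `ChatBackend` is a hypothetical in-memory stand-in for the REST server, `get_messages` plays the role of something like a `GET /messages?after=<index>` call, and the `sleep` parameter exists only so the timer can be swapped out when experimenting.

```python
import time
from typing import Callable


class ChatBackend:
    """Hypothetical in-memory stand-in for the REST backend."""

    def __init__(self) -> None:
        self._messages: list[str] = []
        self.request_count = 0  # how many times clients have called us

    def post_message(self, text: str) -> None:
        self._messages.append(text)

    def get_messages(self, after: int) -> list[str]:
        """Return every message stored after position `after`."""
        self.request_count += 1
        return self._messages[after:]


def short_poll(
    backend: ChatBackend,
    on_message: Callable[[str], None],
    polls: int,
    interval_seconds: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Ask the backend for new messages `polls` times, waiting between calls.

    Returns the number of messages seen in total.
    """
    seen = 0
    for _ in range(polls):
        new_messages = backend.get_messages(after=seen)
        for text in new_messages:
            on_message(text)  # e.g. update the chat window
        seen += len(new_messages)
        sleep(interval_seconds)  # wait for the next tick of the timer
    return seen
```

If one message is posted and the client then polls three times, the backend serves three requests but only the first one returns anything new. The other two are pure overhead.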

There are numerous problems with this approach, including unnecessary load placed on our backend server. After all, if we have hundreds of thousands of users interacting with our application, there’s going to be a whole lot of redundant calls made to our backend even when there have been no data updates. This isn’t good.
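To put a rough number on that load, here is some back-of-the-envelope arithmetic in Python. The 200,000 user count is an assumed figure picked to stand in for "hundreds of thousands"; the 5 second interval is the one from the example above.

```python
def polling_requests_per_second(users: int, interval_seconds: float) -> float:
    """Average request rate when every user polls once per interval."""
    return users / interval_seconds


# Assumed figures: 200,000 connected users, each polling every 5 seconds.
print(polling_requests_per_second(200_000, 5))  # prints 40000.0
```

That is 40,000 requests hitting the backend every second, whether or not anyone has actually sent a message.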

A slightly better approach is to use Long Polling. Long polling involves the client making a request for data to the backend, but the backend not responding immediately. The backend will hold the request until a new update occurs in its database.

Behind the scenes, the backend server will spin up a thread (often on a short lived timer) and periodically query the database for updates. When an update is detected, it will respond with the content back to the client application, and the data will be displayed in the UI.

Afterwards, the whole process repeats – the caller makes another request, and the backend spins its wheels until a new message is received.
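The server side of long polling can be sketched roughly as follows. A few assumptions to flag: this version uses an `asyncio` coroutine rather than a dedicated thread, `MessageStore` is a hypothetical in-memory stand-in for the database, and the `timeout` parameter (which returns an empty response if nothing arrives in time) is my addition rather than something described above.

```python
import asyncio


class MessageStore:
    """Hypothetical in-memory stand-in for the backend database."""

    def __init__(self) -> None:
        self.messages: list[str] = []
        self.query_count = 0  # how often the server has queried us

    def fetch_after(self, index: int) -> list[str]:
        self.query_count += 1
        return self.messages[index:]


async def handle_long_poll(
    store: MessageStore,
    after: int,
    check_interval: float = 0.05,
    timeout: float = 1.0,
) -> list[str]:
    """Hold the request open until new messages exist or `timeout` elapses."""
    loop = asyncio.get_running_loop()
    deadline = loop.time() + timeout
    while True:
        new_messages = store.fetch_after(after)
        if new_messages or loop.time() >= deadline:
            # Respond: either with fresh data, or empty-handed on timeout.
            return new_messages
        # Nothing yet: keep the request pending and check again shortly.
        await asyncio.sleep(check_interval)


async def long_poll_client(store: MessageStore, rounds: int) -> list[str]:
    """Client loop: as soon as one request completes, issue the next."""
    received: list[str] = []
    for _ in range(rounds):
        received.extend(await handle_long_poll(store, after=len(received)))
    return received
```

Notice that the client now makes far fewer requests, but `query_count` keeps climbing on the server while each request is held open.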

Long Polling is a slightly better approach than short polling, with some performance and scaling improvements, but it still presents a scaling bottleneck on our database layer. We’ve simply shifted the problem from being the responsibility of the client to being the responsibility of the server. Better, but not the best.

In either of these two models, short polling or long polling, you’ll have a flow that looks a little something like this:

Now, With Web Sockets…

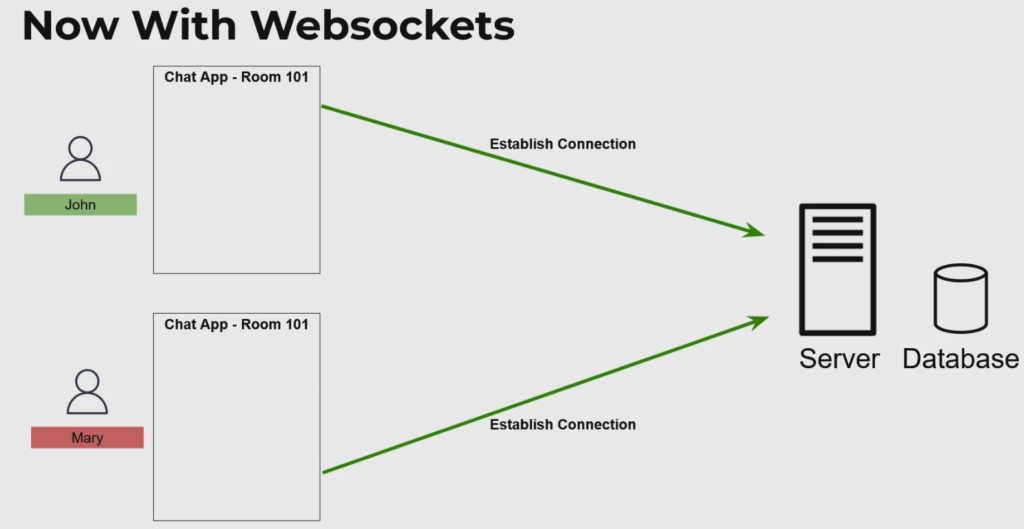

With web sockets, the flow starts out similarly but then diverges. The first step is for the client and server to establish a connection to one another. In this example, both John’s and Mary’s front-end instances establish a connection with the backend server as seen below.

The backend server (depending on which websocket implementation you use) will keep track of the connection state for both clients.

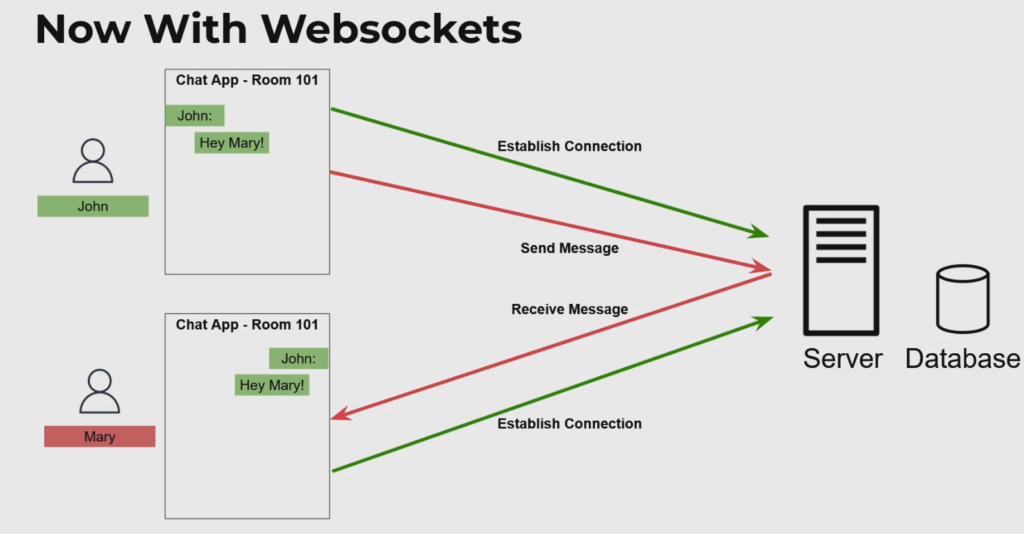

Now, when John sends a message, he will first push the message to the backend server. The backend server will store the message in an internal database (optional, but preferred), and push the message on to the recipient, in this case Mary.
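The connect, store, and push steps can be modelled with a small Python sketch. To be clear about the assumptions: this does not open real WebSocket connections. Each `asyncio.Queue` stands in for one client's open connection, and `ChatHub`, `connect`, and `send` are hypothetical names. In a real application a WebSocket library or managed service would take the place of the queues.

```python
import asyncio


class ChatHub:
    """Hypothetical in-memory model of a WebSocket backend."""

    def __init__(self) -> None:
        # Connection state: one queue per connected user.
        self.connections: dict[str, asyncio.Queue] = {}
        # Stand-in for the internal message database.
        self.stored_messages: list[tuple[str, str, str]] = []

    def connect(self, user: str) -> asyncio.Queue:
        """Register a client and return the 'connection' it listens on."""
        inbox: asyncio.Queue = asyncio.Queue()
        self.connections[user] = inbox
        return inbox

    def disconnect(self, user: str) -> None:
        self.connections.pop(user, None)

    async def send(self, sender: str, recipient: str, text: str) -> bool:
        """Store the message, then push it to the recipient if connected."""
        self.stored_messages.append((sender, recipient, text))
        inbox = self.connections.get(recipient)
        if inbox is None:
            return False  # recipient offline; message is only stored
        await inbox.put(f"{sender}: {text}")  # server-initiated push
        return True


async def demo() -> str:
    hub = ChatHub()
    hub.connect("john")
    mary_inbox = hub.connect("mary")
    await hub.send("john", "mary", "hi Mary")
    # Mary never asked for anything; the message simply arrives.
    return await mary_inbox.get()


print(asyncio.run(demo()))  # prints "john: hi Mary"
```

The key difference from the polling sketches is that Mary's side never issues a request. The server pushes to her connection the moment John's message arrives.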

The end to end flow can be visualized in this diagram:

And that's about it!

As a next step, you can check out my step by step tutorial on setting up websockets on AWS in a completely serverless model.